Command Palette

Search for a command to run...

When Multimodal Computing Begins to Take Off: MiniCPM-o-4.5, With Only 9 Bytes, Covers real-time Image Understanding and Text Generation; vLLM Omni Simultaneously Supports high-throughput Deployment and service-oriented Architecture for Both Text and Multimodal models.

At this critical juncture where multimodal large models are transitioning from "usable" to "easy to use," parameter size, inference cost, and deployment barriers are becoming as important as model capabilities. OpenBMB's latest release, MiniCPM-o-4.5,Omni's full-modal capability is built using only 9B parameters, finding a better solution between lightweight and high performance.

MiniCPM-o-4.5 employs a unified architecture to jointly model and generate outputs from multimodal inputs such as text and images, emphasizing the synergistic optimization of cross-modal alignment capabilities and inference efficiency. Its 9-byte model size allows for inference deployment on mainstream consumer GPUs, making it more engineering-friendly in terms of memory usage and response latency compared to large-scale closed-source models.

at present,HyperAI's official website is now live."MiniCPM-o-4_5: Wallfacer Intelligence Open Source Full-Duplex Full-Modal Model"Come and try it~

Online use:https://go.hyper.ai/iOGzO

A quick overview of hyper.ai's official website updates from February 24th to February 27th:

* High-quality public datasets: 3

* High-quality tutorials: 14

* Popular encyclopedia entries: 5

Visit the official website:hyper.ai

Selected public datasets

1. THINGS-EEG EEG Dataset

THINGS-EEG is an electroencephalogram (EEG) dataset for object cognition research, released by the National Institute of Mental Health of the National Institutes of Health (NIH), the Max Planck Institute for Human Cognition and Brain Sciences in Germany, and the University of Giessen Medical School, among other institutions. It records the EEG activity of 50 subjects while viewing images of objects, and is used to analyze the temporal dynamics and cognitive representations of object processing.

Direct use:https://go.hyper.ai/kqejl

2. THINGS-MEG magnetoencephalography dataset

THINGS-MEG is a magnetoencephalography (MEG) dataset for object cognition research, released by the National Institute of Mental Health of the National Institutes of Health in the United States, the Max Planck Institute for Human Cognition and Brain Sciences in Germany, and the University of Giessen Medical School, among other institutions. It records millisecond-level electromagnetic brain activity when subjects view images of objects, and is used to analyze the temporal dynamics of object processing.

Direct use:https://go.hyper.ai/eBKWI

3. THINGS-fMRI functional magnetic resonance imaging dataset

THINGS-fMRI is a high-density functional magnetic resonance imaging dataset for object cognition research, jointly released by the National Institute of Mental Health of the National Institutes of Health in the United States, the Max Planck Institute for Human Cognition and Brain Sciences in Germany, and the University of Giessen Medical School, among other institutions. It aims to systematically characterize the human brain's visual and semantic representation of objects in the real world.

Direct use:https://go.hyper.ai/CRbiA

Selected Public Tutorials

This week, we have compiled 3 types of high-quality public tutorials:

* OCR Tutorials: 4

* Multimodal tutorials: 6

* Large Language Model Tutorial: 4 parts

OCR Tutorial

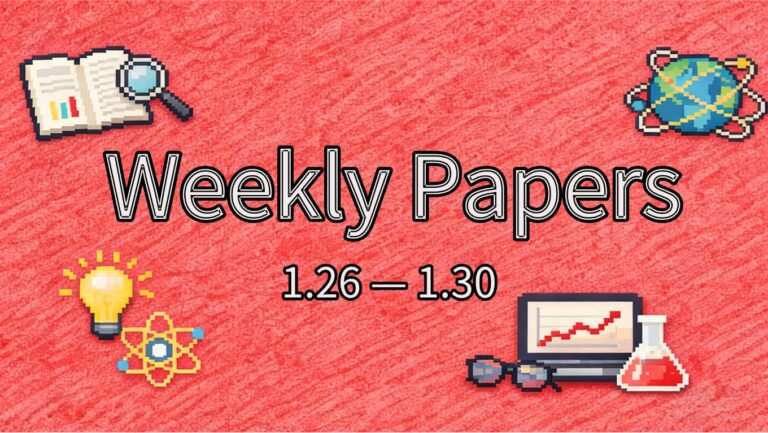

1. GLM-OCR Lightweight Multimodal OCR Recognition System

GLM-OCR is a lightweight, multimodal OCR model (0.9B) open-sourced by Zhipu AI in February 2026, focusing on high-precision text recognition and structured parsing in complex document scenarios. Its core advantages are "small size, high precision, and easy deployment." Based on a GLM-V encoder-decoder multimodal architecture, it integrates the self-developed CogViT visual encoder and RLHF optimization. It topped the state-of-the-art (SOTA) benchmark with a score of 94.62 in the OmniDocBench V1.5 benchmark, achieving performance close to the Gemini-3-Pro. It is suitable for various scenarios such as office document parsing, educational and scientific formula recognition, government and financial document verification, and code snippet extraction.

Run online:https://go.hyper.ai/kgb3n

2. PaddleOCR-VL-1.5: Local OCR based on vLLM

PaddleOCR-VL-1.5 is one of the multimodal OCR models in the PaddleOCR series released by the PaddlePaddle team. It provides stronger text recognition and layout understanding capabilities for complex document scenarios (invoices, contracts, papers, scanned documents, etc.). This tutorial uses the OpenAI-compatible interface of vLLM to connect to this model, realizing the complete link of uploading images and returning recognition results. With its 0.9B parameter count, it achieves a new-generation accuracy of 94.5% on OmniDocBench v1.5.

Run online:https://go.hyper.ai/cea6x

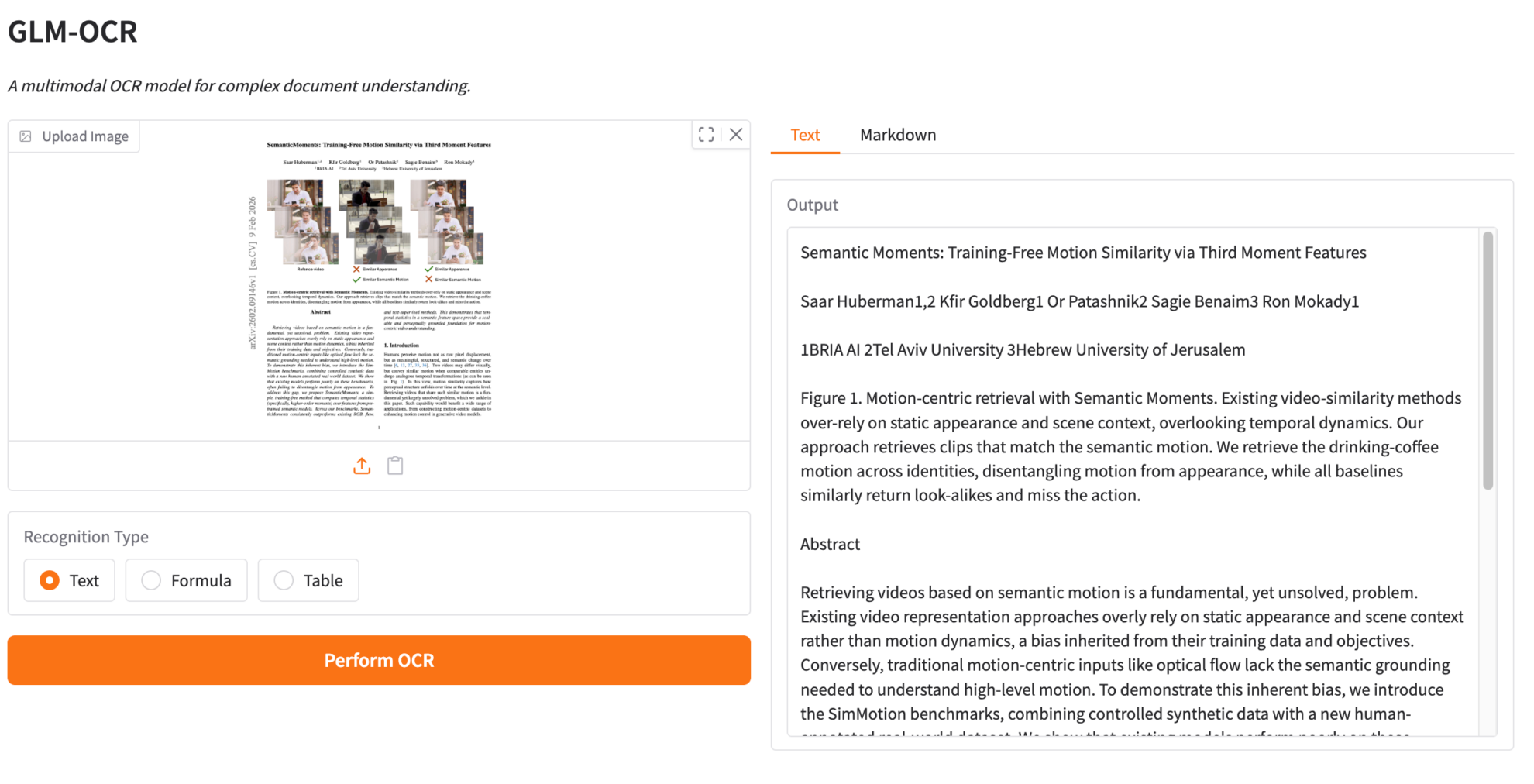

3.LightOnOCR-2-1B Lightweight, High-Performance End-to-End OCR Model

LightOnOCR-2-1B is the latest generation end-to-end visual language model from LightOn AI. As the flagship version in the LightOnOCR series, it unifies document understanding and text generation in a compact architecture, boasts 1 billion parameters, and can run on consumer-grade GPUs. This model employs a Vision-Language Transformer architecture and incorporates RLVR training technology, achieving extremely high recognition accuracy and inference speed. It is specifically designed for applications requiring the processing of complex documents, handwritten text, and LaTeX formulas.

Run online:https://go.hyper.ai/cLSj5

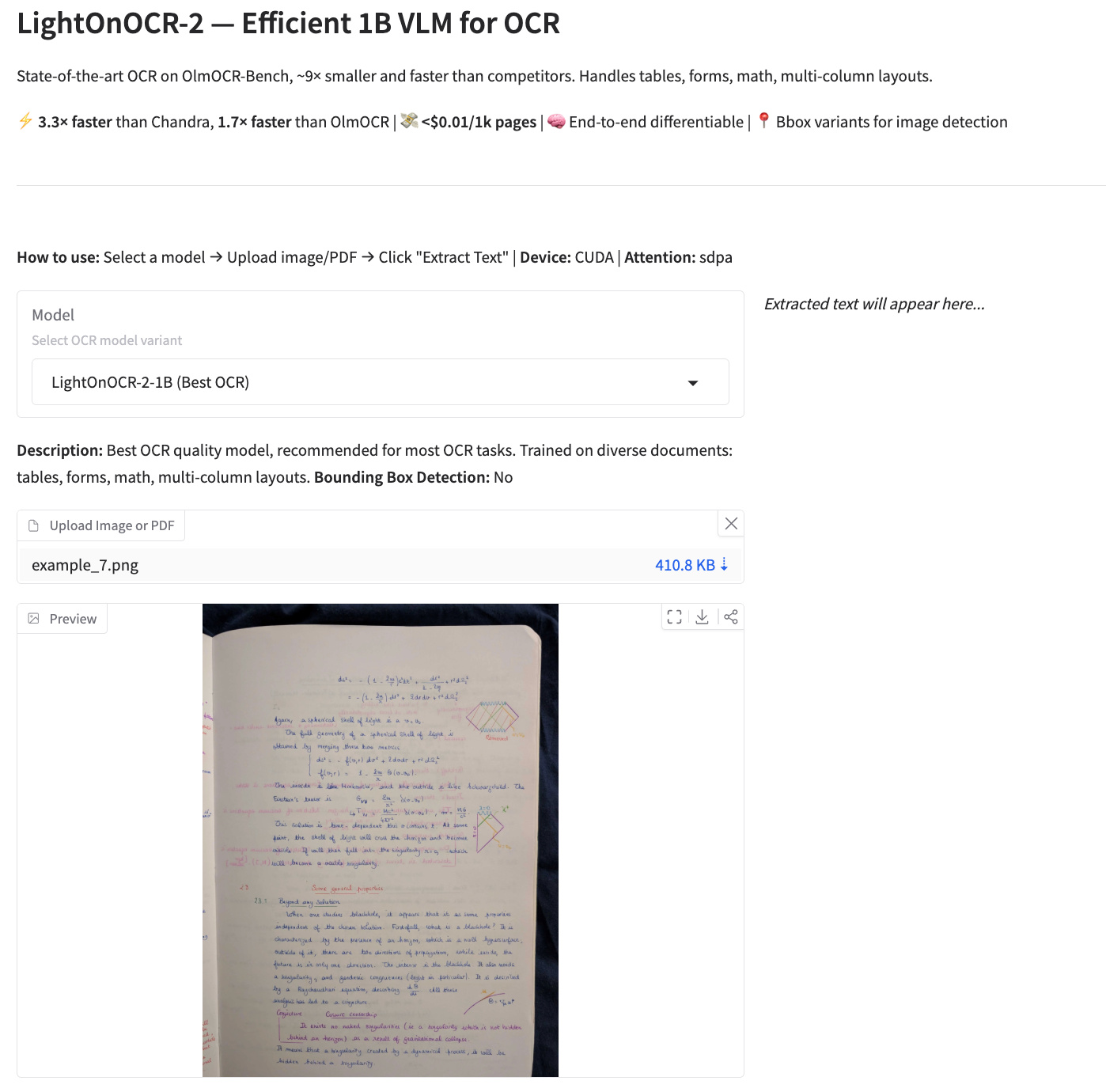

4. DeepSeek-OCR 2 Visual Causal Flow

DeepSeek-OCR 2 is the second-generation OCR model launched by the DeepSeek team. By introducing the DeepEncoder V2 architecture, it achieves a paradigm shift from fixed scanning to semantic reasoning. The model employs causal stream querying and a dual-stream attention mechanism, dynamically rearranging visual tokens to more accurately recreate the natural reading logic of complex documents. In the OmniDocBench v1.5 benchmark, the model achieved a comprehensive score of 91.09%, a significant improvement over its predecessor, while also significantly reducing the repetition rate of OCR recognition results, providing a new path for building a full-modal encoder in the future.

Run online:https://go.hyper.ai/iOGzO

Multimodal Tutorial

1. MiniCPM-o-4.5: Wallfacer Intelligence's open-source full-duplex, full-modal model

MiniCPM-o-4.5 is a 9B-parameter full-modal flagship model open-sourced by Facewall Intelligence and the Tsinghua University Natural Language Processing Lab in February 2026. It employs an end-to-end architecture using siglip2, whisper, cosyvoice2, and qwen3-8b. As the industry's first model to support "real-time free dialogue," it achieves full-duplex interaction – allowing users to simultaneously see, hear, and speak, breaking away from the traditional turn-based "walkie-talkie" mode. This model boasts leading visual understanding capabilities, hyper-humanoid speech generation capabilities, and speech cloning capabilities. It supports proactive interaction and real-time streaming media processing and can run on edge devices. It is compatible with various domestically produced chips, such as ascend and Hygon, and can be efficiently deployed using frameworks such as llama.cpp and vLLM.

Run online:https://go.hyper.ai/iOGzO

2.Deploying Qwen-Image-Edit using vLLM-Omni

Qwen-Image-Edit is a versatile image editing model released by Alibaba. This model possesses dual capabilities in semantic and visual editing, enabling both low-level visual appearance editing (such as adding, deleting, or modifying elements) and high-level visual semantic editing (such as creating IPs, rotating objects, and transferring styles). The model supports precise editing of both Chinese and English bilingual text, modifying text within images while preserving the original font, size, and style.

Run online:https://go.hyper.ai/4w6XA

3. Step3-VL-10B: Multimodal Visual Understanding and Graphical Dialogue

Step3-VL-10B is an open-source visual language foundation model released by the StepFun team, designed specifically for multimodal understanding and complex reasoning tasks. This model aims to redefine the balance between efficiency, reasoning ability, and visual understanding quality, and is suitable for multimodal models with a limited parameter size. Despite its small parameter size, this model demonstrates superior performance in visual perception, complex reasoning, and human instruction alignment. It consistently outperforms models of similar size in multiple benchmarks and rivals models with 10 to 20 times more parameters on certain tasks.

Run online:https://go.hyper.ai/RqTTW

4. Deploy Qwen-Image-2512 using vLLM-Omni

Qwen-Image-2512 is a foundational text-to-image model in the Qwen-Image series, primarily designed for high-quality image generation and complex multimodal content expression. Its focus is on enhancing the overall realism and usability of generated images. Portrait generation significantly improves naturalness, with facial structure, skin texture, and lighting relationships more closely resembling realistic photographs. In natural scenes, the model can generate more detailed terrain textures, vegetation details, and high-frequency information such as animal fur. Its text generation and typography capabilities have also been improved, enabling more stable presentation of readable text and complex font styles.

Run online:https://go.hyper.ai/JMmhs

5. TurboDiffusion: Image and Text-Driven Video Generation System

TurboDiffusion is a high-efficiency video diffusion generation system developed by a team from Tsinghua University in December 2025. Based on the Wan2.1 architecture for higher-order distillation, the system aims to solve the pain points of slow inference speed and high computational resource consumption in large-scale video models, thereby achieving the goal of generating high-quality videos with minimal steps.

Run online:https://go.hyper.ai/VvyVZ

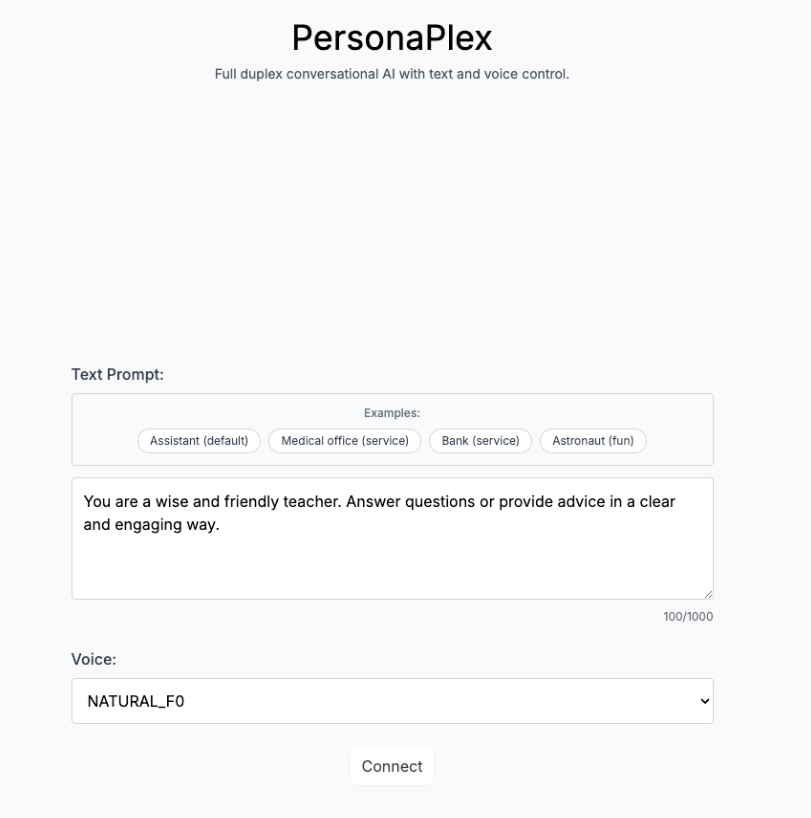

6. Personaplex-7B-v1: Real-time dialogue and character-customized voice interface

PersonaPlex-7B-v1 is a 7 billion parameter multimodal personalized dialogue model released by NVIDIA. It is designed for real-time voice/text interaction, long-term persona consistency simulation, and multimodal perception tasks, aiming to provide an immersive role-playing and multimodal interaction demonstration system with millisecond-level response speed.

Run online:https://go.hyper.ai/ndoj0

Large Language Model Tutorial

1.llama.cpp+Open WebUI deploy Qwen3-VL-8B-Instruct-GGUF

Qwen3-VL-8B-Instruct-GGUF offers a variety of accurate language model variants and a dedicated MMPROJ visual encoder. These models are compatible with tools such as llama.cpp and Ollama, and are well-suited for a wide range of hardware, including CPUs, NVIDIA GPUs, Apple silicon, and Intel GPUs. Qwen3-VL-8B-Instruct-GGUF explicitly distinguishes between the language and visual components in the GGUF format. This allows developers the flexibility to choose the quantization level specific to their hardware, achieving acceptable response times even in resource-constrained CPU environments, while unlocking more performance on systems equipped with GPUs.

Run online:https://go.hyper.ai/EKryC

2. Jacobi Forcing: A fast and accurate causal parallel decoding technique

Jacobi Forcing is a novel training technique introduced by Hao AI Labs that transforms large language models (LLMs) into native causal parallel decoders. By training the model to process noisy future blocks along its own Jacobi decoding trajectory, this technique solves the matching problem from AR to diffusion models while maintaining the integrity of the causal autoregressive backbone structure.

Run online:https://go.hyper.ai/fIad4

3.vLLM+Open WebUI Deployment of GLM-4.7-Flash

GLM-4.7-Flash is a lightweight multimodal inference model that balances high performance and high throughput, natively supporting Chained Thinking (CoT), tool calls, and agent functionality. GLM-4.7-Flash employs a Hybrid Expert (MoE) architecture, leveraging sparse activation mechanisms to significantly reduce the computational cost of each inference while maintaining the expressive power of large models.

Run online:https://go.hyper.ai/a2IN3

4.vLLM+Open WebUI Deployment of LFM2.5-1.2B-Thinking

LFM2.5-1.2B-Thinking is an edge-optimized hybrid architecture model. As a version in the LFM2.5 series specifically optimized for logical inference, it unifies long sequence processing and efficient inference capabilities within a compact architecture. This model boasts 1.2 billion parameters and can run smoothly on consumer-grade GPUs and even edge devices. Employing an innovative hybrid architecture (linear dynamic system + attention), it achieves extremely high memory efficiency and throughput, designed specifically for scenarios requiring real-time on-device inference without sacrificing intelligence.

Run online:https://go.hyper.ai/1XTsV

Community article interpretation

1. A European research team has proposed SeaCast, a high-resolution regional ocean forecasting model that can provide 15-day forecasts in just 20 seconds.

Irregular land-sea distribution, complex lateral boundary conditions, and the need for detailed characterization of vertically stratified variables make existing global-scale ocean AI models difficult to directly adapt to regional tasks. To address this, a joint research team comprised of the University of Helsinki (Finland), the Mediterranean Climate Change Research Center, and the University of Salento (Italy) developed SeaCast, a graph neural network model specifically designed for regional ocean forecasting. It can complete a 15-day forecast across 18 vertical levels within a 1/24° grid in just 20 seconds on a single GPU.

View the full report:https://go.hyper.ai/kRXnE

2. Cornell University proposes an innovative AI framework to decode the chemical mechanism of highly conductive lithium-ion electrolytes, achieving a prediction success rate exceeding 80% for %.

Salt-solvent chemistry underpins the electrolyte behavior in most lithium-ion battery systems, but its rational design is constrained by a vast chemical space encompassing countless combinations and nonlinear structure-performance coupling relationships. This problem is further exacerbated by sparse and unevenly distributed experimental data, hindering the generalization ability of models. A research team from Cornell University has developed a robust, interpretable, and data-efficient framework, SCAN, for modeling and interpreting salt-solvent chemistry. This framework effectively handles long-tailed data and captures the complete spectrum of salt-solvent formulations.

View the full report:https://go.hyper.ai/OrHIt

3. A new method for predicting battery life, proposed by the University of Michigan and others, reduces the verification cycle by 40 times; "discovery learning" saves evaluation time for the 98%.

Accurate and efficient battery cycle life prediction is crucial for the research and large-scale application of next-generation batteries, directly determining their reliability, safety, and total lifecycle cost. Recently, experts from research institutions such as the University of Michigan innovatively proposed a scientific machine learning method called "Discovery Learning (DL)," which organically integrates active learning, physically constrained learning, and zero-shot learning to construct a human-like reasoning closed-loop learning framework. Under conservative assumptions, compared to industrial-grade battery life verification processes, discovery learning can achieve a 981 TP3T evaluation time saving and a 951 TP3T energy saving, shortening the verification cycle from approximately 1,333 days to 33 days.

View the full report:https://go.hyper.ai/28W2g

4. Paper Summary | Over 100 Key AI for Science Achievements: A Quick Overview of Technological Innovations by 2025

Over the past year, the relationship between AI and scientific research has undergone a profound and quiet transformation. By 2025, AI for Science will no longer be just scattered technological applications, but will evolve into a clear, systematic, and reusable path for scientific research and innovation. AI will no longer be just a tool, but is becoming part of the research paradigm. HyperAI has compiled papers from multiple fields, including medical health, materials chemistry, meteorological research, and astronomy, to facilitate quick searching and review for readers with different backgrounds.

View the full report:https://go.hyper.ai/FLJGD

Popular Encyclopedia Articles

1. Reverse sorting combined with RRF

2. Kolmogorov-Arnold Representation Theorem

3. Large-scale multi-task language understanding MMLU

4. BlackBox Optimizers

5. Class-conditional probability

Here are hundreds of AI-related terms compiled to help you understand "artificial intelligence" here:

The above is all the content of this week’s editor’s selection. If you have resources that you want to include on the hyper.ai official website, you are also welcome to leave a message or submit an article to tell us!

See you next week!